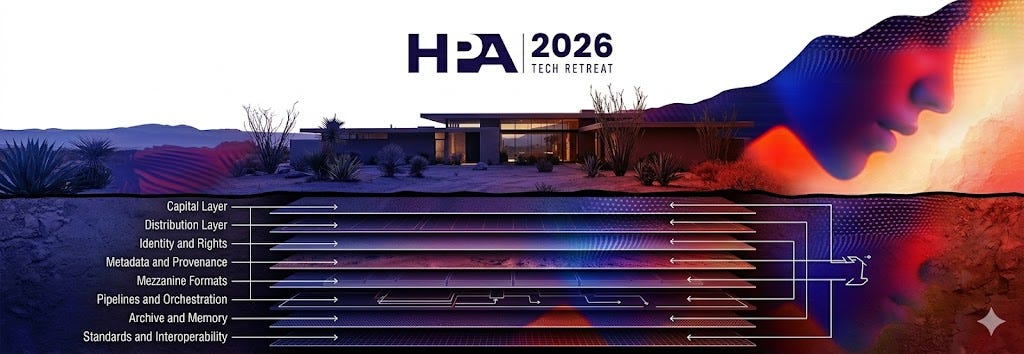

HPA Wasn’t About AI Tools. It Was About Operating Systems.

What the 2026 Tech Retreat revealed about budgets, roadmaps, and the infrastructure layer.

Note: Due to a platform glitch on Friday, this post was not published as planned. We are now posting it free for all to enjoy! Apologies if you already got it in your inbox.

I had the pleasure of attending the 2026 HPA Tech Retreat in Rancho Mirage in February. Same desert. Same single-track format. Same mix of studio executives, VFX supervisors, production technologists, cloud vendors, and the standards people who still care about the plumbing.

What changed wasn’t the setting. It was the layer of the stack everyone was focused on.

As this publishes, Netflix has walked away from its bid for Warner Bros. Discovery while Paramount Skydance moves forward. I’m still formulating my thoughts on what that means for consolidation, leverage, and long-term control of IP. I’ll write about that separately. But the timing is not incidental.

Capital is reorganizing at the top of the industry. Production pipelines are being re-architected underneath it. HPA sat squarely in the seam between th…