The Middle Layer Is Becoming Strategic

Mezzanine Formats in the Age of Inference and Context

In a recent sidebar, Dave Gullo explored what happens when television shifts from a finished product to something composed at runtime. When viewers can influence camera angles, commentary, overlays, and framing, the experience becomes dynamic.

That shift feels like an interface change. It is not.

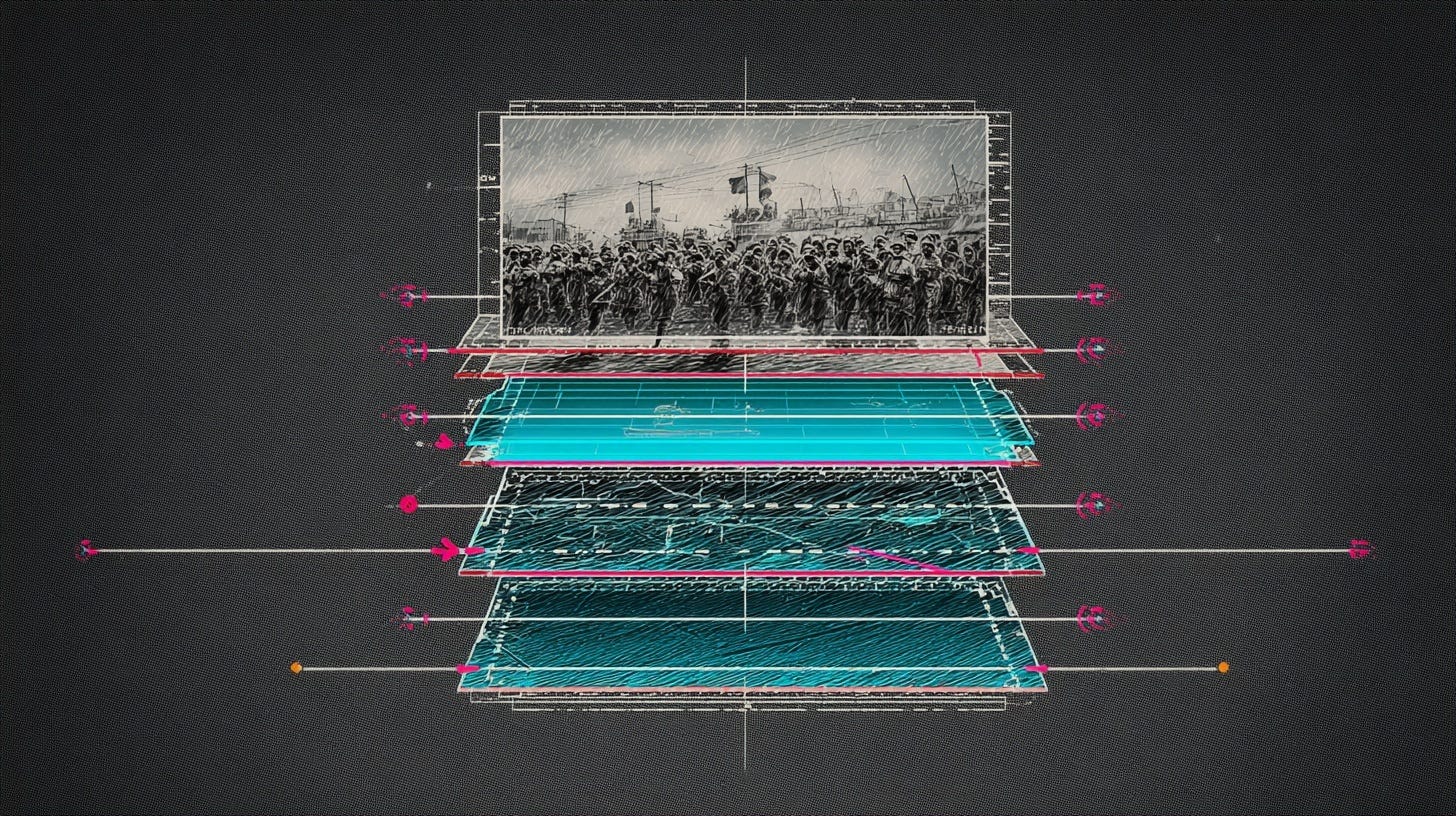

The shift changes the expectations placed on the infrastructure underneath. If composition becomes dynamic, the layer between capture and delivery can no longer act as neutral transport. It must support inference, automation, and structured decision-making. That brings the mezzanine layer into focus.

This does not apply only to live production. The same pressure is building inside library and archival workflows. The difference is not speed. It is direction. In live, inference happens in motion. In archives, inference happens at scale. In both cases, the middle layer becomes the surface that systems read.

What a Mezzanine Format Actually Does

Most people outside engineering circles never think about mezzanine formats. They sit between camera originals and delivery encodes. They are the working format inside production pipelines.

In live environments, mezzanine formats move signals across IP networks, feed replay systems, keep switching responsive, and preserve enough quality for real-time transformation.

In library environments, mezzanine formats sit at the center of ingest, editing, versioning, localization, compliance review, restoration, and distribution preparation. They are the master working copies that archives are built around.

In both cases, the mezzanine is the version you operate on.

Historically, mezzanine decisions were made around three variables: visual quality, latency, and compute cost. In live, latency dominated. In archives, storage efficiency and editability mattered more. Across both, the primary downstream consumer was a human.

That assumption no longer holds.

Why JPEG XS Became the Default

JPEG XS emerged during the broadcast transition from SDI to IP. The industry needed a format that behaved like uncompressed video without consuming uncompressed bandwidth. XS delivered visually transparent compression, extremely low latency, minimal computational overhead, and predictable behavior inside ST 2110-22 environments.
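To make the bandwidth point concrete, here is a rough back-of-the-envelope sketch. It computes the active-picture bitrate of uncompressed video and what remains after compression. The function names and the 4:1 and 10:1 ratios are illustrative assumptions, not figures from this article, and the math ignores blanking, audio, and packet overhead.

```python
def active_video_bitrate_bps(width, height, fps, bit_depth=10, samples_per_pixel=2):
    """Bitrate of the active picture only, in bits per second.

    samples_per_pixel=2 models 4:2:2 sampling (one luma sample per pixel
    plus two chroma samples shared across every two pixels).
    """
    return width * height * samples_per_pixel * bit_depth * fps


def compressed_bitrate_bps(uncompressed_bps, ratio):
    """Bitrate after applying an assumed compression ratio (e.g. 10 for 10:1)."""
    return uncompressed_bps / ratio


if __name__ == "__main__":
    # Hypothetical example: 1080p60, 10-bit, 4:2:2.
    uncompressed = active_video_bitrate_bps(1920, 1080, 60)
    print(f"uncompressed: {uncompressed / 1e9:.2f} Gbit/s")
    for ratio in (4, 10):  # illustrative ratios, chosen for this sketch
        compressed = compressed_bitrate_bps(uncompressed, ratio)
        print(f"{ratio}:1 -> {compressed / 1e6:.0f} Mbit/s")
```

Under these assumptions, a single 1080p60 stream needs roughly 2.5 Gbit/s uncompressed, so even modest compression ratios change how many streams fit on a given network link.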

It required almost no workflow retraining. Vendors adopted it quickly. SMPTE alignment reinforced it. Interop events validated it. OB trucks, replay systems, and routing architectures standardized around it.

It fit the problem of that moment, which was replacing SDI without destabilizing operations.

At the same time, archival and post-production environments were standardizing around a different class of mezzanine formats. ProRes and DNxHR became common working masters inside editing and finishing pipelines. JPEG 2000 found a long life in digital cinema packaging and preservation workflows. In some archive environments, AVC-Intra or high-bitrate H.264 served as pragmatic mezzanine proxies where storage efficiency mattered more than real-time transport.

Those formats were optimized for editability, quality retention, and long-term manageability. They were not built for IP routing at scale. And they were not designed with real-time inference in mind. They solved the dominant archival problems of their time: preserving visual fidelity, surviving multiple generations of re-encoding, and keeping storage costs within reason.

In both broadcast and archive contexts, mezzanine formats won because they reduced friction in the workflows that mattered most. JPEG XS reduced friction in IP transport. ProRes and DNx reduced friction in post. JPEG 2000 reduced friction in digital cinema mastering and preservation.

Standards win when they align with the dominant constraint of the moment. But those moments were defined by transport stability, editing efficiency, and storage economics, not by inference, automation, or dynamic composition. The dominant constraint is changing.

The Constraint Has Changed

The mezzanine layer is no longer just moving video between humans.

In live systems, it increasingly supports real-time AI inference, automated framing and cleanup, highlight extraction, speech-to-text pipelines, localization triggers, and dynamic composition engines. The signal passing through the middle is not only routed and viewed. It is being analyzed and used to drive decisions in real time.

In archival systems, the same shift is happening at scale. Large libraries are indexed with computer vision. Faces are detected and clustered. Scenes are segmented. Objects are identified. Logos are flagged for rights review. Legacy footage is upscaled, reframed, and reformatted for new platforms. Compliance and policy checks operate automatically across entire catalogs.

Across both contexts, systems require more context about the content flowing through them. They need to understand what is present in a frame, how it evolves over time, and how it connects to adjacent frames and external data sources. The mezzanine format becomes the substrate that either preserves or erodes that usable context.

Once context becomes the central requirement, mezzanine formats are no longer judged solely by visual fidelity or latency. They are evaluated by how well they preserve analytic stability, metadata alignment, and long-term structural integrity.

That shift has consequences for live production, archival transformation, and standards evolution.

Below, we examine what changes when machines become first-class consumers of the signal and how that reframes the role of mezzanine formats across the stack.